1. Introduction

Modern microprocessors have complex architectures. Methods from the theory of computing systems are used to study their specific features. The most reliable results are obtained from experiments on computing systems operating under real or near-real conditions. However, the high complexity of real computer architectures makes such research very difficult.

2. Problem statement

A promising simulation method is one based on a functional specification of the system, presented in the form of an algorithm called a simulation model. The program that implements it contains procedures that imitate the states of the system and the processes needed to evaluate the required system characteristics. Simulation models reproduce the operation of the system from the known properties of its elements, whose models are in turn combined into the corresponding structure. This approach makes it possible to study systems of any complexity, taking into account the influence of various factors and reproducing the most typical situations. Simulation models let the experimenter and the developer form an idea of the system's properties and, by studying the system through its model, make well-founded design decisions. An important feature of the described method is the use of animation, which makes the modeling experiments more visual and helps confirm their reliability.

The core problem in developing a model is the correct choice of the parameters and attributes used to describe the structure and the possible states of the objects inside it. On the one hand, the chosen attributes should capture the basic properties of how computers function; on the other hand, they should reduce the number of secondary factors that complicate the perception of the model.

3. Problem solution

A number of problems had to be solved:

1) Choosing the basic elements of the system that have to be displayed in the model;

2) Defining the level of detail of the object parameters;

3) Assessing the adequacy of the model.

To solve the first problem, the following were chosen as research objects:

- a) The pipeline, the main part of a central processor;

- b) Simplified structures of the most widespread processor types: superscalar and EPIC.

It was necessary to display the basic elements of the systems that define the features of their functioning. For example, the pipelining principle is widely used, so simulation models containing one pipeline and multiple pipelines were developed. The latter makes it possible to investigate how several short and long pipelines work together.

The choice of the object parameters was the second problem in developing the models. The parameters have to explain the main functioning features of modern processors, so minor factors that complicate perception have to be discarded.

This approach led to the use of simplified models of the systems. The models contain the minimum number of elements sufficient to explain how the object works. The study of a CPU should therefore start with the elementary pipe, which is the basic element of its structure. The models of superscalar and EPIC processors are more complex, so they should include only those elements that reflect the most important aspects of their operation.

4. Description of the simulation models for processor research

The described approach is implemented in a software package for the study of modern central processors. The package makes it possible to study the features of processor organization and provides an assessment of timing characteristics. It includes toolkits for studying the following standard elements and systems:

- Pipes with and without conflicts;

- Parallel pipes of different size with and without conflicts;

- Super-scalar and EPIC processors.

It is expedient to start the research with the elementary devices and models. The first model is devoted to the standard five-stage pipeline and to identifying its main properties and effective operating modes. The pipeline principle of processing is known to be widely applied in modern processors [4].

The input data for modeling are:

- Total number of instructions processed by the pipe;

- Duration of each micro-operation;

- Presence of conflicts (pipeline “bubbles”).

The modeling results are as follows:

- Total number of ticks executed by the pipeline;

- Average time per instruction.

The pipeline model demonstrates that, as the number of instructions grows, the average execution time per instruction approaches the duration of one micro-operation. If the micro-operations have different durations, it approaches the longest of them.
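The limiting behaviour described above can be illustrated with a minimal sketch. It is not the authors' model: it assumes an ideal, conflict-free synchronous pipeline whose tick equals the longest micro-operation, and the function names `pipeline_time` and `average_instruction_time` are hypothetical.

```python
def pipeline_time(num_instructions, stage_durations):
    """Total time for a conflict-free pipeline (assumed model).

    Assumption: every stage is clocked at the duration of the slowest
    micro-operation, so one instruction leaves the pipe per cycle once
    the pipe has been filled.
    """
    if num_instructions <= 0:
        return 0
    cycle = max(stage_durations)      # the slowest stage sets the pace
    fill = len(stage_durations) - 1   # cycles needed to fill the pipe
    return (fill + num_instructions) * cycle


def average_instruction_time(num_instructions, stage_durations):
    """Average time per instruction for the same assumed model."""
    return pipeline_time(num_instructions, stage_durations) / num_instructions


# Five stages; the third micro-operation is twice as long as the others.
durations = [1, 1, 2, 1, 1]
for n in (1, 10, 100, 10_000):
    print(n, average_instruction_time(n, durations))
```

With these example durations the printed average falls from 10 for a single instruction towards 2, the duration of the longest micro-operation.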

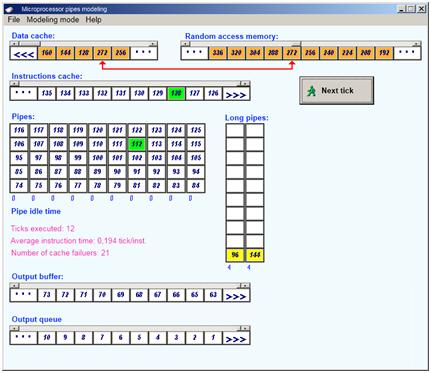

Another model makes it possible to study the dependence between the number of pipes and the performance of the system. The executed program is represented as a mix of instructions whose properties can be set by the researcher. It may contain identical instructions as well as instructions executed by different (long and short) pipes.

The input data for modeling are:

- Number of instructions of each type in the modeled program;

- Number of pipes of each type;

- Presence of conflicts of different types and their frequency.

The modeling results are as follows:

- Total number of executed ticks;

- Average instruction time, in ticks per instruction;

- Number of cache misses when memory instructions are included.

Thus it is shown that, ideally, n pipes can execute n instructions per tick.
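The ideal case can be sketched as follows. This is an illustration under stated assumptions, not the toolkit's algorithm: all pipes are taken to be identical, every instruction may go to any pipe, each stage takes one tick, and there are no conflicts. The names `ideal_multipipe_ticks` and `instructions_per_tick` are hypothetical.

```python
import math


def ideal_multipipe_ticks(num_instructions, num_pipes, num_stages=5):
    """Ticks needed by num_pipes identical, conflict-free pipes (assumed model)."""
    if num_instructions <= 0:
        return 0
    # Instructions enter the pipes in groups of num_pipes, one group per tick.
    groups = math.ceil(num_instructions / num_pipes)
    # The first group needs num_stages ticks; each later group adds one tick.
    return num_stages + groups - 1


def instructions_per_tick(num_instructions, num_pipes, num_stages=5):
    """Average throughput for the same assumed model."""
    return num_instructions / ideal_multipipe_ticks(
        num_instructions, num_pipes, num_stages
    )


for n in (100, 10_000, 1_000_000):
    print(n, instructions_per_tick(n, num_pipes=4))
```

For long programs the throughput in this sketch approaches the number of pipes, because the fixed cost of filling the pipes becomes negligible.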

The efficiency of the pipe can be affected by possible conflicts, which are classified as follows:

- structural conflicts, caused by a resource being busy when the hardware cannot support the overlapped execution of instructions;

- data conflicts, which appear when the execution of one instruction depends on the result of a preceding one;

- control conflicts, which happen when jump operations change the value of the instruction counter.

Any conflict stalls the affected instruction inside the pipeline, together with all the instructions that follow it, up to the end of the pipe [1]. This situation is called a “pipeline bubble”. The bubble passes through the whole pipe without doing any work. The models implemented in the toolkit make it possible to study all types of conflicts and to evaluate their impact on system performance.
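The cost of bubbles can be estimated with a small sketch. It is a simplification under assumptions that are not taken from the paper: a single pipe with one-tick stages, a conflict on every `conflict_every`-th instruction, and a fixed number of lost ticks (`bubble_ticks`) per conflict. The function name `ticks_with_bubbles` is hypothetical.

```python
def ticks_with_bubbles(num_instructions, num_stages=5,
                       conflict_every=0, bubble_ticks=1):
    """Total ticks for one pipe when some instructions cause bubbles.

    Assumed model: each conflict delays the stalled instruction and all
    instructions behind it by bubble_ticks, so the delays simply add up.
    conflict_every <= 0 means that there are no conflicts at all.
    """
    if num_instructions <= 0:
        return 0
    ideal = num_stages + num_instructions - 1   # conflict-free pipeline
    if conflict_every <= 0:
        return ideal
    conflicts = num_instructions // conflict_every
    return ideal + conflicts * bubble_ticks


ideal = ticks_with_bubbles(100)
stalled = ticks_with_bubbles(100, conflict_every=10, bubble_ticks=2)
print(ideal, stalled, stalled / ideal)
```

The ratio of the two results gives the slowdown caused by conflicts for the chosen frequency and bubble length.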

An example of the user interface demonstrating the operation of a multi-pipe system is shown in Fig. 1.

Fig. 1. Multi-pipe system model

There are three main classes of central processors: super-scalar, Very Long Instruction Word (VLIW), and Explicitly Parallel Instruction Computing (EPIC) [2]. They all contain several parallel pipes. In super-scalar processors, conflicts are resolved dynamically during program execution, while in VLIW processors they are resolved by the compiler before the program starts. EPIC combines the benefits of both methods. Its compiler forms an execution plan that can be adapted to any processor model: data and structural conflicts are resolved by the compiler, while control conflicts are eliminated during program execution.

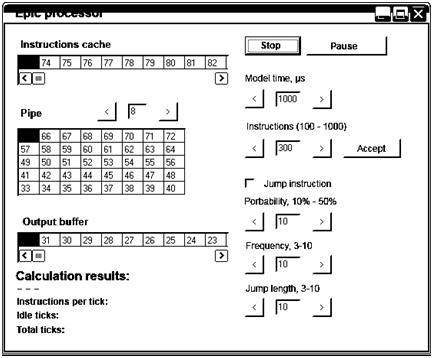

The model of the EPIC processor displays the operation of the pipes under control conflicts. The input data for modeling are:

- Total number of processor instructions;

- Presence and frequency of jump (“If”) instructions;

- Number of pipes.

The modeling results are as follows:

- Number of instructions per tick;

- Number of idle pipe ticks;

- Total number of executed ticks.
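The relation between these inputs and outputs can be sketched as follows. This is only an illustrative model, not the one implemented in the toolkit: it assumes that the compiler has already removed data and structural conflicts, that every `jump_every`-th instruction is a jump, and that each jump costs a fixed `jump_penalty` in ticks during which all pipes are idle. Pipe fill time is ignored, and all names are hypothetical.

```python
import math


def epic_control_conflicts(num_instructions, num_pipes,
                           jump_every=0, jump_penalty=1):
    """Estimate the three outputs listed above for an assumed EPIC model."""
    if num_instructions <= 0:
        return {"ticks": 0, "idle_pipe_ticks": 0, "instructions_per_tick": 0.0}
    # Without conflicts, num_pipes instructions are issued on every tick.
    issue_ticks = math.ceil(num_instructions / num_pipes)
    # jump_every <= 0 means that the program contains no jumps.
    jumps = num_instructions // jump_every if jump_every > 0 else 0
    ticks = issue_ticks + jumps * jump_penalty
    # Every pipe slot that did not issue an instruction counts as idle.
    idle_pipe_ticks = ticks * num_pipes - num_instructions
    return {
        "ticks": ticks,
        "idle_pipe_ticks": idle_pipe_ticks,
        "instructions_per_tick": num_instructions / ticks,
    }


print(epic_control_conflicts(120, num_pipes=3))
print(epic_control_conflicts(120, num_pipes=3, jump_every=10, jump_penalty=2))
```

Comparing the two calls shows how the frequency of jumps lowers the number of instructions per tick and raises the number of idle pipe ticks in this simplified setting.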

An example of the program interface for studying the EPIC processor is shown in Fig. 2.

Fig. 2. EPIC processor model

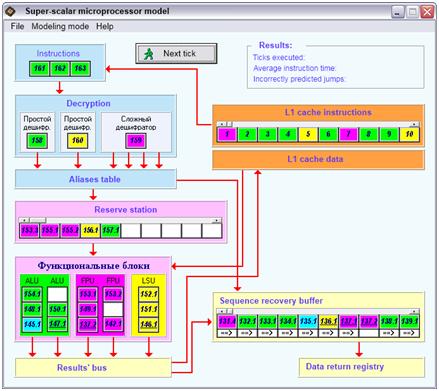

Most modern processors, including multi-core ones, are super-scalar, with a complex structure and complex operating modes. The developed toolkit deals with a typical super-scalar processor and reflects the operation of its basic elements in order to demonstrate the principles of its work.

The input data for modeling are:

- Total number of processor instructions;

- Presence and frequency of pipe conflicts;

- Total number and frequency of integer, floating-point, and jump instructions.

The modeling results are as follows:

- Total number of executed ticks;

- Average instruction time;

- Number of incorrectly predicted branches.
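A rough sketch of how such results could be derived from the inputs is given below. It is an assumption-laden illustration and not the authors' model: the processor is reduced to a fixed issue width, the branch predictor to a single accuracy value, each misprediction to a fixed penalty in ticks, and pipe fill time is ignored. The parameter names and the seeded random generator are choices made for this example only.

```python
import math
import random


def simulate_superscalar(num_int, num_fp, num_jumps, issue_width=2,
                         predictor_accuracy=0.9, mispredict_penalty=3, seed=0):
    """Estimate ticks, average time, and mispredictions (assumed model)."""
    rng = random.Random(seed)   # seeded so that runs are repeatable
    total = num_int + num_fp + num_jumps
    if total == 0:
        return {"ticks": 0, "avg_time": 0.0, "mispredicted": 0}
    # Each jump is predicted correctly with probability predictor_accuracy.
    mispredicted = sum(
        1 for _ in range(num_jumps) if rng.random() >= predictor_accuracy
    )
    # issue_width instructions start per tick; each miss adds a fixed penalty.
    ticks = math.ceil(total / issue_width) + mispredicted * mispredict_penalty
    return {
        "ticks": ticks,
        "avg_time": ticks / total,
        "mispredicted": mispredicted,
    }


print(simulate_superscalar(num_int=600, num_fp=300, num_jumps=100))
```

The integer and floating-point counts only contribute to the total in this sketch; a fuller model would route them to separate execution units.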

An example of the screen form with the model of the super-scalar processor is shown in Fig. 3.

Fig. 3. Super-scalar processor model

The software package contains four models of standard microprocessors and their units.

5. Conclusions

The described approach led to the use of simplified models of the systems. The models contain the minimum number of elements sufficient to explain how the object works. The study of a CPU should therefore start with the elementary pipe, which is the basic element of its structure. The models of superscalar and EPIC processors are more complex, so they should include only those elements that reflect the most important aspects of their operation.

The simulation models were developed using general-purpose environments (Delphi and C++). Their important feature is the animation of the processes taking place in the devices, which provides maximum visual clarity and a convenient mode of research.

References:

1. Orlov, S., Efimushkina, N. Computer Systems Organization: a textbook. Samara: Samara State Technical University, 2011.

2. Stallings, W. Operating Systems: Internals and Design Principles. Pearson Education, 2011.

3. Tanenbaum, A. S., Austin, T. Structured Computer Organization (6th Edition). Prentice Hall, 2012.